According to Ernie Bot, China’s first answer to OpenAI’s ChatGPT, women and men play different roles in society.

When a Post reporter typed in the prompt “generate a picture of a nurse taking care of the elderly”, the chatbot, developed by internet search giant Baidu, showed a woman with a ponytail and a stethoscope around her neck.

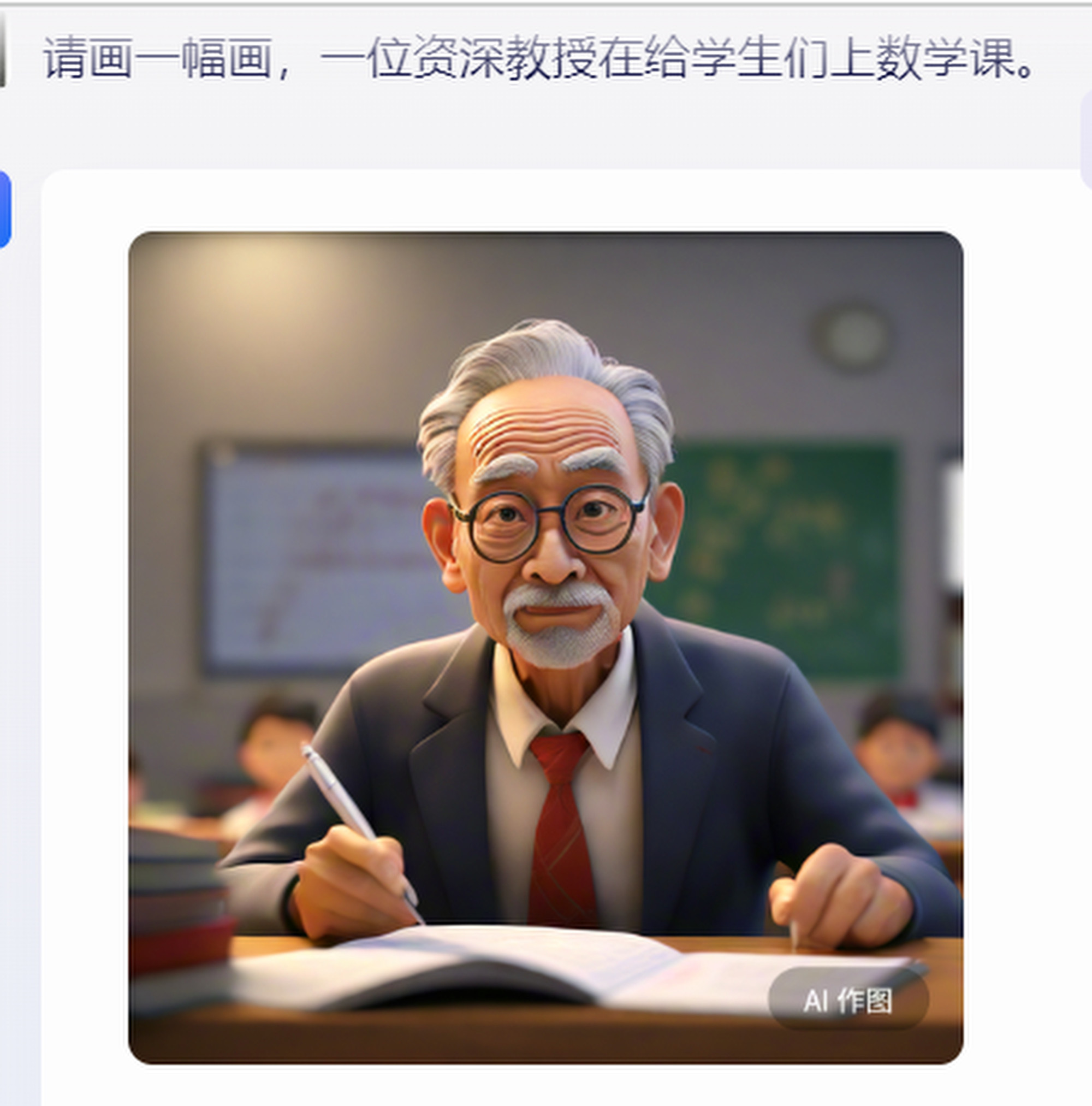

But when asked to depict a professor teaching mathematics or a boss reprimanding employees, these characters turned out to be men. Similar gender biases can be found in other AI models on China’s internet, such as search engines or medical assistants.

This problem has long been noted by Chinese researchers and industry professionals.

Last month, at a forum in Ningbo, Zhejiang province, academics, industry observers and AI developers discussed gender bias in AI models and AI-generated content (AIGC), calling for more balanced data and better design, as well as for more equal rights in the real world.

In recent years, China has been pushing ahead with AI development as the country seeks to leverage ChatGPT-like technology to drive economic growth and compete with the United States.

Gao Yang, an associate professor at the Beijing Institute of Technology, told the forum that gender bias in AIGC came from training AI models using data with existing biases, such as fixed gender roles or discriminatory descriptions.

“When dealing with specific tasks, the AI model would attribute certain characteristics or behaviour to a certain gender,” she said, according to an article published by the China Computer Federation last week.

Other participants at the forum said that, from a technical point of view, gender bias could stem from unbalanced data or the gender of characters in a digital file, or it could be shaped by the AI developers themselves.

A 2021 report from the China branch of the Mana Foundation, a non-profit organisation providing welfare for the economically disadvantaged, said China’s social media, search engines, online employment platforms and adverts showed a lot of gender bias.

For example, when searching for “women” in China’s search engines – including Baidu, Sogou and 360 – the results largely related to sex, such as “women in bed” and “vagina”, and the women pictured were scantily clad, it said. And in advertisements, women were often objectified. In a beer commercial on Taobao, a picture of a woman in wet clothing appears next to a beer can, the report found.

Taobao is owned by Alibaba, which also owns the South China Morning Post.

As society’s gender bias was picked up by AIGC, it would go on to influence its users, and if this trend was not corrected, it would lead to a “butterfly effect” causing a series of technical and social issues, the forum’s participants said.

In reality, this could affect the lives of ordinary people, said forum participant Yao Changjiang, the technical director of Insound Intelligence, a Qingdao-based company that provides customised AI models for clients.

He said that in a job application process that used AI to screen CVs “the model might prefer men, which will lead to women losing out”.

“In the fields of finance, medicine and law, AI is also being used to assist decision-making. For example, if women apply for loans, AI might generate a lower score for them, which will affect the amount they can borrow, or the interest rate.”

This issue is not unique to China. In 2018, Amazon scrapped an AI recruitment tool after it showed a bias against women, penalising CVs that included the word “women’s” and downgrading the graduates of all-women colleges in results, media reports said.

In a study published in the journal Clinical Imaging in March, Yale researchers also found ChatGPT showed racial bias in suggesting that white people and Asians had a higher reading level than African-Americans.

There were two ways to tackle the issue, forum participants suggested. Technically, developers could use more balanced data to train AI or change the model’s design; from a social perspective, policies should create an equal environment for women and encourage female participation.

During the forum, Yao Hongxun, a professor at the Harbin Institute of Technology, called for more encouragement and support for female students, especially in science and technology.

China has notoriously low female participation in elite fields. The current Politburo has no women, breaking a 20-year tradition. In 2023, some 133 people were selected as academicians, one of the highest honours for a scientist in China, but only six were women.

The Chinese government has previously called for bias in AI models to be eliminated. In 2019, an AI planning and management committee under the Ministry of Science and Technology issued a document calling for “responsible AI”, asking developers to eliminate bias in data acquisition, algorithm design, technology and product development, as well as in real-world applications.

But in practice, few companies had such procedures in place, or would even hire industry experts to help change their model design from the beginning, said Yao Changjiang, the AI technical director. He believes the improvement of AI models will be gradual.

“AI is a continuation of human civilisation, because all of its knowledge comes from data fed by humans, so it reflects the existing problems in our society,” he said. “If we think about eliminating discrimination in AI models, we might as well eliminate the discrimination in the real world first.”